Data Model Verification to Improve the Quality of Your Data Warehouse Schema

What does it take to ensure the quality and robustness of your data warehouse implementation? An in-depth data model verification system that allows you to thoroughly check your source and destination models and help fix them before they are ever deployed. Because if your data warehouse schema is accurate, the subsequent data loading and reporting processes will automatically be streamlined and error-free.

Data conceptual models serve as the foundation of any data warehouse architecture that is designed to deliver trusted data for reporting and analytics. Being the very core of such incredible machinery (data warehouse), it only makes sense to ensure that the data models, when put to use, work smoothly without giving any errors or disrupting the development process.

Image Credits: Geek & Poke

But building data models is rarely straightforward. Some would say it’d be a dream come true for data modelers who want their models to work like a charm the first time developers use them for data loading processes. So how can you, as a modeler, achieve this nirvana of complete trust and confidence in your data models and skip the lengthy testing cycles?

The answer lies in performing comprehensive data model validation checks during the design time and right before they are deployed.

Let’s take a deep dive into what is meant by data model verification and how it can impact the overall timeline and quality of your data warehouse project.

What Makes Data Model Verification a DW Essential

Catching those data model errors at design time is essential

When creating the data warehouse schema, data modelers have to be careful about a lot of finer details for their data models to run smoothly in the subsequent processes. To give you an idea, let’s have a look at two major system data modeling phases that would greatly benefit from proper verification checks.

1. Design-Time Verification Checks

Data models are built with reference to your source and destination databases. You need to make sure that your models meet certain rules, statistical tests, and standards of these databases and have no logical errors.

From the source data modeling perspective, different types of verification validations involve looking for field-level mismatches between the model and the source database. These range from differences in data types, indexes, aliases, column and table names, all the way down to character lengths, the nullability of columns, primary keys, and missing fields in the database.

In essence, if the database tables and source data model are not in sync, you’ll get errors at a much later stage when you are using the data models for building data pipelines and mapping fields.

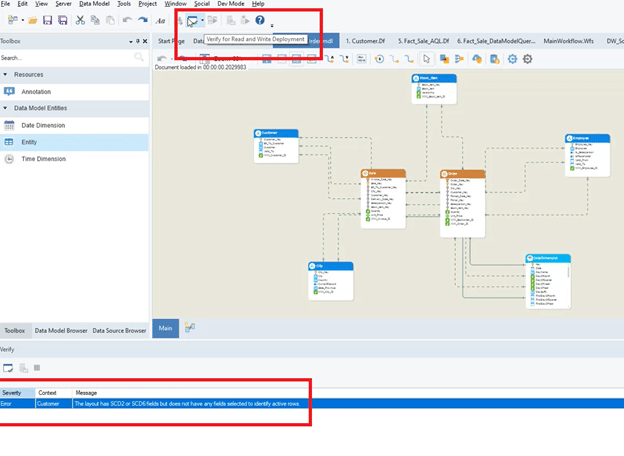

Let’s talk about building the destination schema, which could be 3NF, dimensional model, or data vault. For the sake of this discussion, we’ll use dimensional modeling (because of its popularity among the masses). It requires you to configure facts and dimensions by defining business and surrogate keys, transaction date keys, SCD roles, row identifiers, null value checks and a few other specifications.

Let’s take the example of an Employee dimension. You want to maintain history of various aspects of employees, like when they were promoted, the change in salaries, the date they were recruited and left the company, etc. For this, you’ll need to use either SCD Type 2 or Type 6. In addition, you have to ensure that business and surrogate keys are assigned, relationships are established, and one row identifier is defined.

Missing surrogate key error in Employee dimension with complete context

So, what will happen if one or more pieces of the puzzle are missing? Let’s say the surrogate key hasn’t been defined.

When you execute the data pipeline for the first time, it will insert the data normally. When To fix this, the ETL developers will have to communicate this issue to the data modeling team, who will then have to go and find that specific dimension with the missing surrogate key to fix it. Again, all this rework would be a long process.

2. Verification Checks for Ensuring Compliance with the Destination Database

After you’ve built the data warehouse schema, the next step is to replicate it in the target database. At this point, you need to ensure that your schema’s script complies with the destination database provider, which could be Snowflake, Oracle, Azure, or Teradata.

To put this into perspective, let’s take the example of Snowflake and how it doesn’t use indexes. So if your source system is SQL Server, which supports all different kinds of indexing options (primary key, clustered, non-clustered, etc.), Snowflake will give an error that indexing is not supported at runtime.

With a data model validation module in place, you can easily validate a model and test data models’ quality and integrity before they are either deployed on the target database or used for populating the data warehouse. It saves teams a lot of development hours that would otherwise be spent in redoing the data model design and rebuilding the data pipelines.

Does Your Data Modeling Team Need a Verification System?

They most certainly do.

Let’s talk about the sheer benefits data warehousing teams enjoy with having a competent data model verification system in place.

Pinpoints Where the Errors and Warnings Exactly Are

Consider you have about 60 tables in your OLTP database from which you have created a source data model. Some tables could have well over 30 fields. Now when you are trying to load data in these tables, your database provider gives the error that certain data types and character lengths are not supported and must be changed. It is likely that you and your fellow data modelers have to spend hours on end to scour through the models, check each entity, and correct all these errors.

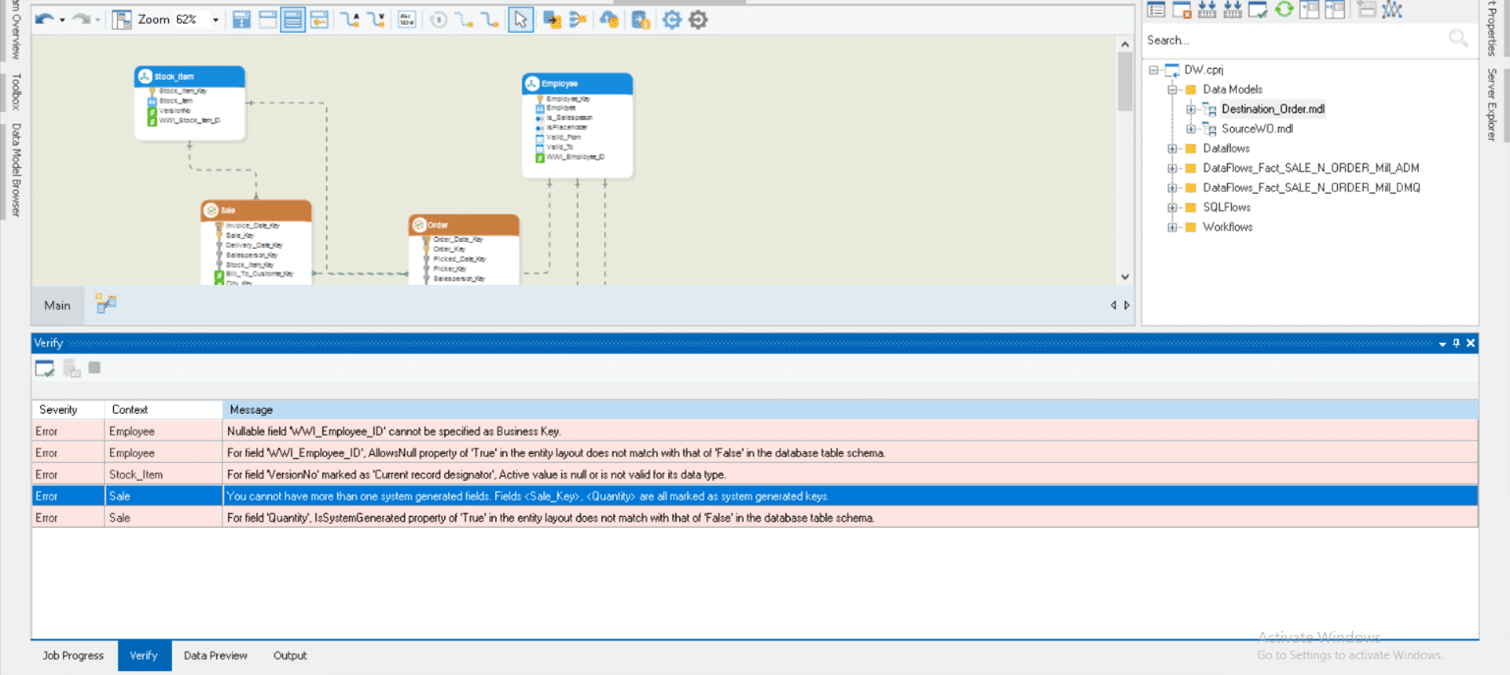

Some common errors in data models

Some common errors in data models

Having a data model verification tool helps identify all the errors and warnings instantly and provides a complete context of where they are. It practically takes out the guesswork from testing your data models and directs you right where you need to perform fixes in your schema with an iterative process.

Saves from Going Back and Forth Between Teams

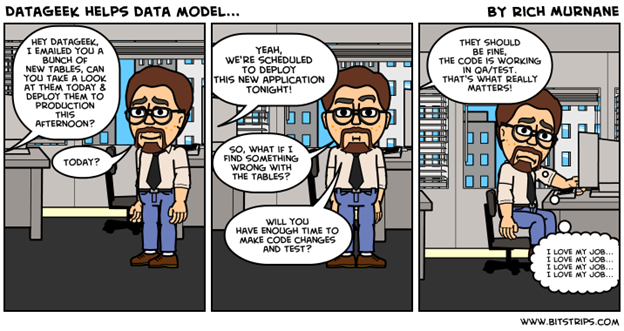

Image Credits: Rich Murnane’s Blog

In a typical setting, data warehousing projects have multiple teams handling various aspects of the data warehouse. There will be data modelers, DBAs, ETL developers, data architects, and several others.

The data modeling team works with the business users to determine their reporting needs, consults with DBAs to get provider specifications, builds and deploys the models, and finally gives the green signal to the ETL developers to start building pipelines for loading data.

Now, even with a data warehousing tool, it is a labor-intensive process. But the errors generally don’t show up until you reach the point of executing data pipelines for populating the data warehouse.

An in-depth data model verification system saves users from going back to the starting line, making changes to the data model, handing it over to the next team, and then running the data loading flows to check if it works fine. By making the fixes as you design the data models, you can streamline the process dramatically, speeding up the entire development cycle by hours, if not days or weeks.

Ensures Compliance with the Database Provider’s Scripting Rules

Let’s say your OLTP database is in SQL Server, and you are building the data warehouse in Oracle. When deploying your schema on an Oracle database, you are likely to encounter issues with restrictions in data types and character lengths and scale as both providers handle these parameters differently. Similarly, you might get an error on having a clustered index in your destination model, which, again, Oracle doesn’t support.

With a data model verification system in place, you can easily resolve these compliance issues well before deploying the schema on the Oracle database. This saves both data modelers and ETL developers many hours-worth of development rework that would otherwise go into identifying the issues and resolving them in each data model entity.

Speeds Up the Overall Data Warehousing Process

“Man, if only there were a system to test these data models and notified me of its errors right away, we would’ve saved tons of development hours gone in fixing them again and again.”

~Anonymous, Wishful Data Modeler

An effective data model verification system can be the holy grail for data warehousing teams as it runs hundreds of checks both at the design time and deployment time to ensure your data models are stable. It allows testing the integrity and accuracy of the models based on the reporting requirements and ensures that it meets the scripting standards of the respective database provider.

With everything in order before the data models are deployed and handed over to the next team, organizations can benefit from significant time savings, leading to a reduced project timeline.

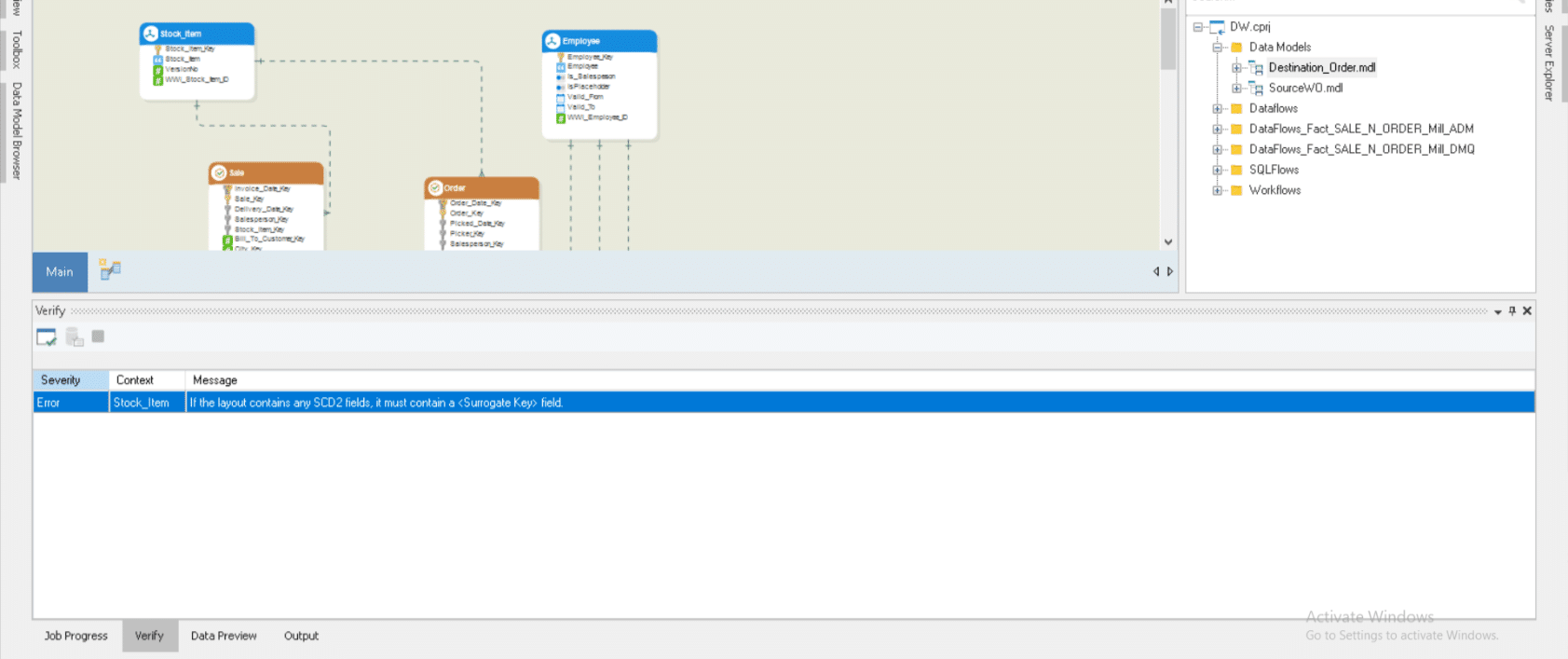

Leverage One-Click Data Model Verification with Astera DW Builder

The above discussion shows that a data model verification system is a vital core for the data modeling process and helps streamline the processes across the data warehouse lifecycle.

Astera DW Builder is an agile, metadata-driven solution that allows you to design, deploy, and test data models and utilize them for building data load pipelines, all from a single platform. It comes packed with an in-depth data model verification module that stands guard at the deployment time and ensures no error or warning goes past unchecked by the user.

With just a single click, the platform automatically provides you with a picture of the health of your data models. From checking for missing business keys, relationship anomalies, and mismatched data types to ensuring compliance with the target database’s scripting standards, you can count on Astera DW Builder to identify and report all possible errors and warnings. This ensures that your data models are stable and complete before moving to the project’s next phase.

Intrigued and want to see how you can validate your data models and streamline the entire data warehousing process? Check out the product demo, or take it out for a spin to see for yourself with a free 14-day trial.