What is a data pipeline?

A data pipeline is a set of processes and methods used to move data from different source systems into a centralized repository, usually a data warehouse or a data lake, for analysis and further use.

It streamlines the flow of data from source systems, transforms the data to align it with the schema of the target system, and loads it into a data warehouse. Data is processed before it reaches the destination system, but it does not always require transformation, especially if it flows into a data lake.

Data scientists and analysts use data pipelines to prepare data for various initiatives, such as feature engineering or feeding it into machine learning models for training and evaluation. Business users can use a data pipeline builder, a no-code/low-code, GUI-based tool, to build their own pipelines without relying on IT.

What is a big data pipeline?

The concept of managing large volumes of data has been around for decades, but the term “big data” gained popularity in the mid-2000s as the volume, velocity, and variety of data being generated started to increase dramatically. With technologies like social media, mobile devices, IoT devices, and sensors becoming more common, organizations began to realize the potential value of harnessing and analyzing vast amounts of data. However, to process data at such a scale, businesses need an equally capable data pipeline—a big data pipeline.

A big data pipeline refers to the process of collecting, processing, and analyzing large volumes of data from disparate sources in a systematic and efficient manner. Like a traditional data pipeline, it involves several stages, including data ingestion, storage, processing, transformation, and analysis. A big data pipeline typically utilizes distributed computing frameworks and technologies, given the need to handle data at a massive scale.
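
To make the idea of distributed processing concrete, here is a minimal single-machine sketch in Python of the partition, map, and reduce pattern. It splits a dataset into partitions, processes each partition in parallel, and merges the partial results. Distributed frameworks such as Apache Spark apply the same pattern across many machines. Here, a thread pool stands in for the cluster, and the event records and function names are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from functools import reduce

def partition(records, num_partitions):
    """Split records into roughly equal partitions (round-robin)."""
    return [records[i::num_partitions] for i in range(num_partitions)]

def count_events(partition_records):
    """Map step: count event types within a single partition."""
    return Counter(r["event"] for r in partition_records)

def merge_counts(a, b):
    """Reduce step: combine partial counts from two partitions."""
    return a + b

def count_events_parallel(records, num_partitions=4):
    parts = partition(records, num_partitions)
    # Each partition is processed independently, so the work can run in parallel.
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partials = list(pool.map(count_events, parts))
    return reduce(merge_counts, partials, Counter())

events = [{"event": "click"}, {"event": "view"}, {"event": "click"},
          {"event": "purchase"}, {"event": "view"}, {"event": "click"}]
print(count_events_parallel(events))
```

Each partition is processed on its own, so adding partitions (or, in a real cluster, machines) spreads the work without changing the merge logic.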

How have data pipelines evolved?

Data pipelines have come a long way over the past four decades. Initially, data scientists and engineers had to manually extract, transform, and load (ETL) data into databases. These ingestion and processing jobs typically ran on a schedule, usually once a day, and the manual effort involved made them time-consuming and prone to errors.

With the proliferation of internet-connected devices, social media, and online services, the demand for real-time data processing surged. Traditional batch processing pipelines were no longer sufficient to handle the volume and velocity of incoming data. Over time, pipelines became more flexible and began moving data from cloud sources to cloud destinations, such as AWS and Snowflake.

Today, data pipelines focus on ingesting data, particularly real-time data, and making it available for use as quickly as possible. This makes workflow automation and process orchestration all the more important. Modern data pipeline tools also incorporate robust data governance features.

Data pipeline architecture

A data pipeline architecture refers to the structure and design of the system that enables the flow of data from its source to its destination while undergoing various processing stages. The following components make up the data pipeline architecture:

- Data sources: A variety of sources generate data, such as customer interactions on a website, transactions in a retail store, IoT devices, or any other data-generating sources within an organization.

- Data ingestion layer: This layer establishes connections with these data sources via appropriate protocols and connectors to retrieve data. Once connected, relevant data is extracted from each source. The business rules define whether entire datasets or only specific data points are extracted. The method of extraction depends on the data source format—structured data can be retrieved using queries, while unstructured data mostly requires specialized data extraction tools or techniques.

- Data storage layer: The ingested data is in raw form and, therefore, must be stored before it can be processed.

- Data processing layer: The processing layer includes processes and tools to transform raw data.

- Data delivery and analytics layer: The transformed data is loaded into a data warehouse or another repository and made available for reporting and data analytics.
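
The following is a minimal Python sketch of how these layers hand data to one another. It makes three assumptions: the source emits JSON events, an in-memory list acts as the raw storage zone, and an in-memory SQLite database stands in for the data warehouse. All function names and fields are hypothetical and were chosen only to mirror the layer names above.

```python
import json
import sqlite3

# Hypothetical data source: raw JSON events, e.g. website interactions.
SOURCE_EVENTS = [
    '{"customer_id": "a1", "action": "view", "amount": null}',
    '{"customer_id": "b2", "action": "purchase", "amount": "19.99"}',
    '{"customer_id": "a1", "action": "purchase", "amount": "5.01"}',
]

def ingest(source):
    """Ingestion layer: read from the source and parse each raw record."""
    return [json.loads(line) for line in source]

def store_raw(records, raw_zone):
    """Storage layer: persist the raw records unchanged before any processing."""
    raw_zone.extend(records)
    return raw_zone

def process(raw_zone):
    """Processing layer: keep purchases and convert amounts to numbers."""
    return [
        (r["customer_id"], float(r["amount"]))
        for r in raw_zone
        if r["action"] == "purchase" and r["amount"] is not None
    ]

def deliver(rows, conn):
    """Delivery layer: load processed rows into a table that reports can query."""
    conn.execute("CREATE TABLE IF NOT EXISTS purchases (customer_id TEXT, amount REAL)")
    conn.executemany("INSERT INTO purchases VALUES (?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
raw_zone = store_raw(ingest(SOURCE_EVENTS), [])
deliver(process(raw_zone), conn)
totals = conn.execute(
    "SELECT customer_id, SUM(amount) FROM purchases GROUP BY customer_id ORDER BY customer_id"
).fetchall()
print(totals)
```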

Types of data pipelines

There are multiple types of data pipelines, each catering to different usage scenarios. Depending on their needs and infrastructure, businesses can deploy data pipelines on-premises or in the cloud, and cloud deployments are becoming increasingly prevalent. Here are the different kinds of data pipelines:

Batch processing data pipelines

ETL batch processing pipelines process data in large volumes at scheduled intervals. They are ideal for handling historical data analysis, offline reporting, and batch-oriented tasks.
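
As a rough illustration, the hypothetical job below processes one day's sales in a single pass and aggregates them per store. A scheduler such as cron or a workflow orchestrator (not shown) would run it once per interval. The field names and sample data are assumptions.

```python
from collections import defaultdict
from datetime import date, datetime

def run_daily_batch(records, batch_date):
    """Process every record whose timestamp falls on batch_date, in one pass."""
    totals = defaultdict(float)
    for r in records:
        ts = datetime.fromisoformat(r["ts"])
        if ts.date() == batch_date:
            totals[r["store"]] += r["amount"]
    return dict(totals)

# Hypothetical sales collected over the interval, waiting for the batch run.
sales = [
    {"ts": "2024-05-01T09:15:00", "store": "north", "amount": 120.0},
    {"ts": "2024-05-01T17:40:00", "store": "south", "amount": 80.0},
    {"ts": "2024-05-01T18:05:00", "store": "north", "amount": 30.0},
    {"ts": "2024-05-02T08:00:00", "store": "north", "amount": 55.0},
]
print(run_daily_batch(sales, date(2024, 5, 1)))
```

Results for a given day only appear after that day's batch runs. This latency is what streaming pipelines are designed to reduce.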

Streaming data pipelines

Also called real-time or event-driven data pipelines, these pipelines process data in real time or near real time, that is, with very low latency. They are designed to ingest and move data from streaming data sources, such as sensors, logs, or social media feeds. Streaming data pipelines enable immediate analysis of and response to emerging trends, anomalies, or events, making them critical for applications like fraud detection, real-time analytics, and monitoring systems.
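
Unlike a batch job, a streaming pipeline handles each event as it arrives. The sketch below assumes a simple rule: flag a transaction larger than three times the mean of the previous three. It uses a Python generator as a stand-in for a real source such as a message queue. The rule and all names are illustrative only and do not represent a production fraud model.

```python
from collections import deque
from typing import Iterable, Iterator

def detect_anomalies(stream: Iterable[float], window: int = 3,
                     factor: float = 3.0) -> Iterator[float]:
    """Yield each value exceeding `factor` times the mean of the previous `window` values."""
    recent = deque(maxlen=window)  # only the most recent values are kept in memory
    for amount in stream:
        if len(recent) == window and amount > factor * (sum(recent) / window):
            yield amount  # flagged immediately, without waiting for a batch
        recent.append(amount)

def transaction_stream():
    # Hypothetical stand-in for an unbounded source such as a message queue or sensor feed.
    for amount in [20.0, 25.0, 22.0, 24.0, 300.0, 21.0]:
        yield amount

for flagged in detect_anomalies(transaction_stream()):
    print("possible fraud:", flagged)
```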

Data integration pipelines

Data integration is an automated process that moves data from various sources, transforms it into a usable format, and delivers it to a target location for further analysis or use. Data integration pipelines can be further categorized depending on whether the data is transformed before or after being loaded into a data warehouse.

ETL Pipelines

ETL pipelines are widely used for data integration and data warehousing. They involve extracting data from various sources, transforming it into a consistent format, and loading it into a target system. ETL pipelines are typically batch-oriented but can be augmented with real-time components for more dynamic data processing.
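
Here is a minimal ETL sketch in Python. It assumes two hypothetical sources with different formats: an online-orders CSV and in-store records stored in cents. The transformation into a consistent schema happens in Python before anything is loaded, and SQLite stands in for the target system.

```python
import csv
import io
import sqlite3

# Hypothetical sources with inconsistent formats.
ONLINE_CSV = "order_id,total_usd\n1001,25.50\n1002,14.00\n"
IN_STORE = [{"id": "S-7", "total_cents": 999}]

def extract():
    """Extract: read raw records from each source."""
    online = list(csv.DictReader(io.StringIO(ONLINE_CSV)))
    return online, IN_STORE

def transform(online, in_store):
    """Transform: map both sources onto one schema (order_id, channel, total in USD)."""
    rows = [(r["order_id"], "online", float(r["total_usd"])) for r in online]
    rows += [(r["id"], "store", r["total_cents"] / 100) for r in in_store]
    return rows

def load(rows, conn):
    """Load: write the already-transformed rows into the target table."""
    conn.execute("CREATE TABLE orders (order_id TEXT, channel TEXT, total REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

conn = sqlite3.connect(":memory:")
load(transform(*extract()), conn)
print(conn.execute(
    "SELECT channel, COUNT(*) FROM orders GROUP BY channel ORDER BY channel"
).fetchall())
```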

ELT Pipelines

Extract, load, and transform (ELT) pipelines are similar to ETL pipelines, but with a different sequence of steps. In ELT, data is first loaded into a target system and then transformed using the target system's own processing power and capabilities.
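
For comparison, here is a minimal ELT sketch. It again uses an in-memory SQLite database as a stand-in for a cloud data warehouse. The raw records are loaded unchanged, and the transformation then runs as SQL inside the target. Table and column names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Extract + Load: land the raw records as-is, with no cleanup.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, total TEXT, status TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1001", "25.50", "PAID"), ("1002", "14.00", "cancelled"), ("1003", "9.99", "paid")],
)

# Transform: runs inside the target system, using its own SQL engine.
conn.execute("""
    CREATE TABLE paid_orders AS
    SELECT order_id, CAST(total AS REAL) AS total
    FROM raw_orders
    WHERE LOWER(status) = 'paid'
""")

print(conn.execute("SELECT order_id, total FROM paid_orders ORDER BY order_id").fetchall())
```

The raw_orders table is kept alongside the transformed table. This means the transformation can be rerun or changed later without extracting from the source again, which is one reason teams choose ELT.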

Data pipeline vs. ETL pipeline

Given the similarities between a data pipeline and ETL, it’s fairly common to come across the question “what is an ETL data pipeline?” Data pipelines and ETL are closely related; in fact, a data pipeline is a broader concept that includes ETL pipelines as a subcategory. However, there are some fundamental differences between the two:

While a data pipeline doesn’t always involve data transformation, it’s a requisite step in an ETL data pipeline. Additionally, ETL pipelines generally move data via batch processing, while data pipelines also support data movement via streaming.

Data pipeline

- Data Movement and Integration: Data pipelines are primarily focused on moving data from one system to another and integrating data from various sources. They enable efficient, often real-time, transfer of data between systems or services.

- Flexibility: They can be more flexible and versatile than ETL processes. They are often used for real-time data streaming, batch processing, or both, depending on the use case.

- Streaming Data: Data pipelines are well-suited for handling streaming data, such as data generated continuously from IoT devices, social media, or web applications.

- Use Cases: Common use cases for data pipelines include log and event processing, real-time analytics, data replication, and data synchronization.

ETL pipeline

- Structured Process: ETL processes follow a structured sequence of tasks: data extraction from source systems, data transformation to meet business requirements, and data loading into a target repository (often a data warehouse).

- Batch Processing: ETL processes are typically designed for batch processing, where data is collected over a period (e.g., daily or hourly) and transformed before it is loaded into the target system.

- Complex Transformations: ETL is the right choice when you need to perform complex data transformations, such as aggregations, data cleansing, and data enrichment.

- Data Warehousing: You should opt for ETL processes when you need to consolidate data from multiple sources and transform it to support business intelligence and reporting.

- Historical Analysis: ETL processes are suitable for historical data analysis and reporting, where data is stored in a structured format, optimized for querying and analysis.

Commonalities:

- Data Transformation: Both data pipelines and ETL processes involve data transformation, but the complexity and timing of these transformations differ.

- Data Quality: Ensuring data quality is important in both data pipelines and ETL processes.

- Monitoring and Logging: Both require monitoring and logging capabilities to track data movement, transformation, and errors.

Building a data pipeline

Building an efficient system for consolidating data requires careful planning and setup. There are typically six main stages in the process:

- Identifying Data Sources: The first step is to identify and understand the data sources. These could be databases, APIs, files, data lakes, external services, or IoT devices. Determine the format, structure, and location of the data.

- Data Integration: Extract and combine data from the identified sources using data connectors. This may involve querying databases, fetching data from APIs, reading files, or capturing streaming data.

- Data Transformation: After extracting data, transform and cleanse it to ensure its quality and consistency. Data transformation involves tasks such as data cleaning, filtering, aggregating, merging, and enriching. This stage ensures that the data is in the desired format and structure for analysis and consumption.

- Data Loading: After transforming, load the data into the target system or repository for storage, analysis, or further processing. During the loading stage, the pipelines transfer the transformed data to data warehouses, data lakes, or other storage solutions. This enables end-users or downstream applications to access and utilize the data effectively.

- Automation and Scheduling: Set up automation and scheduling mechanisms to execute the data pipeline at regular intervals or in response to specific events. Automation minimizes manual intervention and ensures data is always up-to-date.

- Monitoring and Evaluating: Implement robust data pipeline monitoring and metrics to track the health and performance of the data architecture. Set up alerts to notify you of issues or anomalies that require attention. This stage helps optimize your data pipelines to ensure maximum efficiency in moving data.
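
The sketch below ties several of these stages together in Python. Hypothetical extract, transform, and load functions run in sequence, transient failures are retried, and each stage logs its row count, duration, and attempts so problems surface quickly. It assumes that a scheduler or orchestrator (not shown) invokes run_pipeline, and it uses a Python list as a stand-in for the warehouse.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(name)s: %(message)s")
log = logging.getLogger("pipeline")

def run_pipeline(stages, data=None, max_retries=2):
    """Run (name, func) stages in order, retrying failures and recording metrics."""
    metrics = {}
    for name, func in stages:
        for attempt in range(1, max_retries + 2):
            start = time.perf_counter()
            try:
                data = func(data)
                break
            except Exception as exc:
                log.warning("%s failed on attempt %d: %s", name, attempt, exc)
                if attempt > max_retries:
                    log.error("%s exhausted its retries", name)  # hook alerting in here
                    raise
        elapsed = time.perf_counter() - start
        rows = len(data) if hasattr(data, "__len__") else None
        metrics[name] = {"seconds": elapsed, "rows": rows, "attempts": attempt}
        log.info("%s ok: rows=%s attempts=%d time=%.4fs", name, rows, attempt, elapsed)
    return data, metrics

# Hypothetical stages for illustration.
calls = {"extract": 0}

def extract(_):
    calls["extract"] += 1
    if calls["extract"] == 1:  # simulate one transient source outage
        raise ConnectionError("source temporarily unavailable")
    return [{"id": 1, "email": " A@X.COM "}, {"id": 2, "email": None},
            {"id": 1, "email": "a@x.com"}]

def transform(rows):
    seen, clean = set(), []
    for r in rows:
        if r["email"] and r["id"] not in seen:  # drop missing emails and duplicate ids
            seen.add(r["id"])
            clean.append({"id": r["id"], "email": r["email"].strip().lower()})
    return clean

warehouse = []  # stand-in for the target repository

def load(rows):
    warehouse.extend(rows)
    return rows

result, metrics = run_pipeline([("extract", extract), ("transform", transform), ("load", load)])
```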

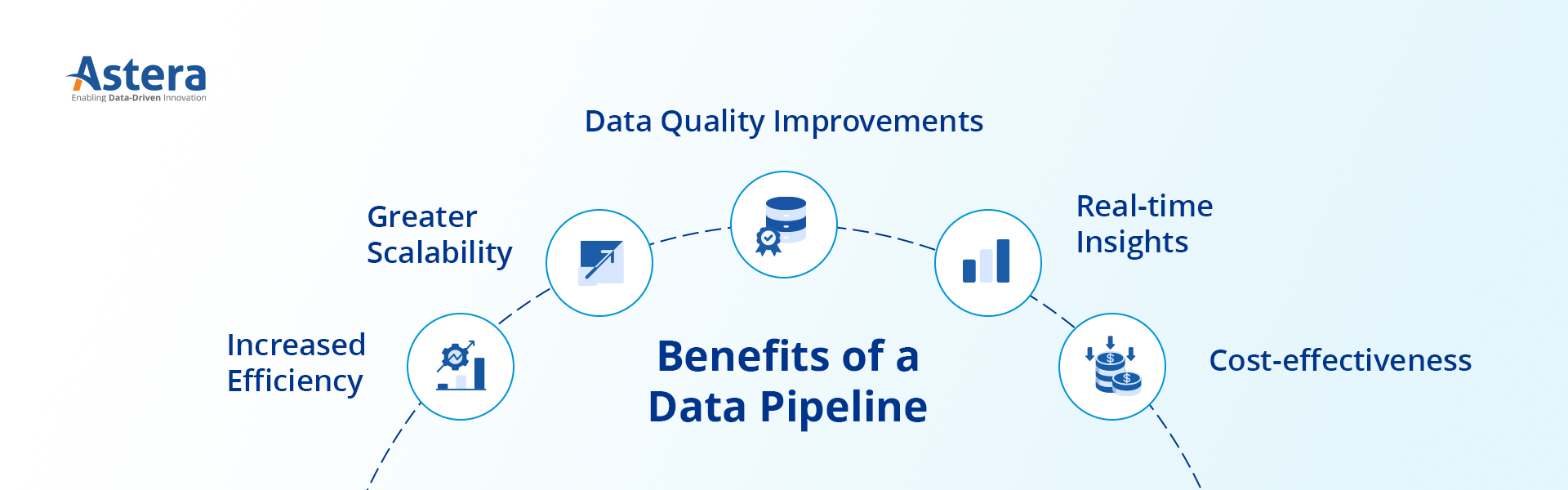

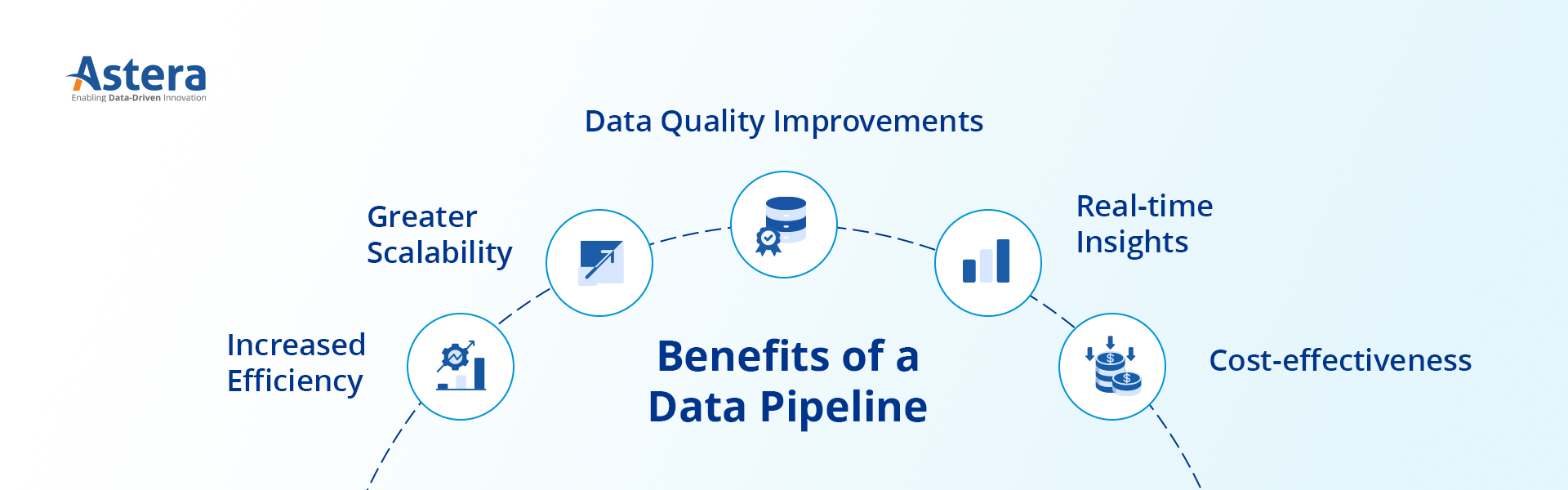

Benefits of a data pipeline

Automated data pipelines combine data from different sources and are essential for the smooth and reliable management of data throughout its lifecycle. Here are some benefits of data pipelines:

Increased efficiency

Data pipelines automate data workflows, reduce manual effort, and increase overall efficiency in data processing. For instance, they can extract data from various sources like online sales, in-store sales, and customer feedback. They can then transform that data into a unified format and load it into a data warehouse. This reduces errors in turning raw data into actionable insights and helps the business understand customer behavior and preferences better.

Promoting data governance

Data pipelines help ensure that data is handled in a way that complies with internal policies and external regulations. For example, in insurance, data pipelines manage sensitive policyholder data during claim processing. They help ensure compliance with regulations like the European Union’s General Data Protection Regulation (GDPR), safeguarding data and building trust with policyholders.

Greater scalability

They can handle large volumes of data, allowing organizations to scale their operations as their data needs grow. By adopting a scalable architecture, businesses can accommodate increasing data demands without compromising performance.

Data quality improvements

Through data cleansing and transformation processes, they enhance data quality and ensure accuracy for analysis and decision-making. By maintaining high data quality standards, organizations can rely on trustworthy insights to drive their business activities.

Real-time insights

Real-time data enables organizations to receive up-to-date information for immediate action. Modern data pipelines are capable of delivering data for analysis as it is generated. By leveraging timely data insights, businesses can make agile and proactive decisions, gaining a competitive advantage in dynamic market conditions.

For example, in the ride-sharing industry, they enable swift processing of data to match drivers with riders, optimize routes, and calculate fares. They also facilitate dynamic pricing, where fares can be adjusted in real-time based on factors like demand, traffic, and weather conditions, thereby enhancing operational efficiency.

Cost-effectiveness

They optimize resource utilization, minimizing costs associated with manual data handling and processing. By reducing the time and effort required for data operations, organizations can allocate resources more efficiently.

Data pipeline use cases

Data pipelines serve many purposes across industries, improving the flow of data within organizations and supporting timely, data-driven decision-making.

For instance, in the finance sector, they help integrate stock prices and transaction records, enabling financial institutions to enhance risk management, detect fraud, and ensure regulatory compliance.

In the healthcare industry, pipelines integrate electronic health records and lab results, contributing to improved patient monitoring, population health management, and clinical research.

In the retail and e-commerce sector, they integrate customer data from e-commerce platforms and point-of-sale systems, allowing for effective inventory management, customer segmentation, and personalized marketing strategies.

Some more data pipeline use cases:

Real-time analytics

Data pipelines enable organizations to collect, process, and analyze data in real time. By harnessing the power of real-time analytics, businesses can make timely decisions, react swiftly to market changes, and gain a competitive edge.

Data integration

Data pipelines use data connectors to consolidate data from various sources, including databases, APIs, and third-party platforms, into a unified format for analysis and reporting. This integration allows organizations to harness the full potential of their data assets and obtain a holistic view of their operations.

Data migration

They facilitate smooth and efficient data migration from legacy systems to modern infrastructure. By minimizing disruption during the transition, they let organizations adopt advanced technologies and drive innovation.

Machine learning and AI

They provide a seamless flow of data for training machine learning models. This enables organizations to develop predictive analytics, automate processes, and unlock the power of artificial intelligence to drive their business forward.

Business intelligence

Data pipelines support the extraction and transformation of data to generate meaningful insights. By harnessing the power of business intelligence, organizations can make data-driven decisions, identify trends, and devise effective strategies.

Working with data pipeline tools

Building data pipelines manually is time-consuming and prone to errors. For example, organizations that use Python to build data pipelines realize that managing pipelines quickly becomes a challenging endeavor as data sources and complexity grow. Instead of investing more in building a bigger team of developers, a more cost-effective and sustainable strategy would be to incorporate a modern data pipeline solution into the data stack.

Data pipeline tools make it easier to build data pipelines because they offer a visual interface. However, choosing the right tool is a critical decision, given how many are available and that no two are built equally. The right tool provides connectivity to a wide range of databases, APIs, and cloud destinations. It supports near real-time data integration via ETL, ELT, and change data capture. It also scales to handle growing data volumes and concurrent users with ease.
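
Change data capture (CDC) tools typically read a database's transaction log. A simpler approximation is incremental extraction with a high-water mark. Each run pulls only the rows whose updated_at timestamp is newer than the last one seen. This sketch is an assumption, not a description of how any particular tool works, and the table and column names are hypothetical.

```python
import sqlite3

def extract_changes(conn, last_seen):
    """Return rows modified after the last_seen watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers WHERE updated_at > ? ORDER BY updated_at",
        (last_seen,),
    ).fetchall()
    new_watermark = rows[-1][2] if rows else last_seen  # newest timestamp seen so far
    return rows, new_watermark

src = sqlite3.connect(":memory:")
src.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)")
src.executemany("INSERT INTO customers VALUES (?, ?, ?)", [
    (1, "Ada", "2024-05-01T10:00:00"),
    (2, "Grace", "2024-05-01T11:00:00"),
])

batch1, wm = extract_changes(src, "")  # first run: initial full load
src.execute("UPDATE customers SET name = 'Grace H.', updated_at = '2024-05-01T12:00:00' WHERE id = 2")
batch2, wm = extract_changes(src, wm)  # second run: only the changed row
print(len(batch1), batch2)
```

Unlike log-based CDC, this approach does not see deleted rows, and it relies on every write updating updated_at.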

For example, Astera is a no-code data management solution that enables you to build enterprise-grade data pipelines within minutes. It allows you to create and schedule ETL and ELT pipelines in a simple drag-and-drop interface. Astera supports seamless connectivity to industry-leading databases, data warehouses, and data lakes with its vast library of native connectors. Additionally, you can automate all dataflows and workflows and monitor data movement in real time. Business users can take advantage of advanced built-in data transformations, data quality features, version control, and data governance and security features to build data pipelines on their own.

Emerging trends surrounding data pipelines

Beyond the common use cases, data pipelines have applications in various advanced scenarios and emerging trends:

- Real-time Personalization: Data pipelines enable real-time personalization by analyzing user behavior data and delivering personalized content or recommendations in real time.

- Internet of Things (IoT) Data Processing: With the rise of IoT devices, data pipelines are used to ingest, process, and analyze massive amounts of sensor data generated by IoT devices, enabling real-time insights and automation.

- Data Mesh: The data mesh concept decentralizes data pipelines and establishes domain-oriented, self-serve data infrastructure. It promotes data ownership, autonomy, and easy access to data, leading to improved scalability and agility in data processing.

- Federated Learning: They support federated learning approaches, where machine learning models are trained collaboratively on distributed data sources while maintaining data privacy and security.

- Explainable AI: They can incorporate techniques for generating explainable AI models, providing transparency and interpretability in complex machine learning models.

Conclusion

Data pipelines play a vital role in the modern data landscape, facilitating efficient data processing, integration, and analysis. By leveraging the power of an automated data pipeline builder, you can enhance decision-making, improve operational efficiency, and gain valuable insights from your data. Data integration tools like Astera simplify the creation of end-to-end dataflows. Ready to build and deploy high-performing data pipelines in minutes? Download a 14-day free trial to get a test run or contact us.

Authors:

Astera Analytics Team